What is Project Vault

You can read a quick overview on various news sites, but basically Project Vault gives you a cryptographic module that you have complete control over. This means *you* decide whether to trust the module, even to the point of being able to access the implementation details of the crypto cores.

Basically Project Vault is a way to avoid having unknown backdoors in your hardware. Rather than having to trust some vendor of security modules, you can check for yourself that things are done correctly.

About the AES Core

The crypto modules have a nice description, which you can read here. Of interest to us is this statement:

The AES core can encrypt and decrypt blocks of data in three modes of operation: AES-128, AES-192, and AES-256. The design is based upon a purely gate logic implementation of the forward and reverse sboxes due to the work of Boyar and Peralta. This avoids differential power attacks present in purely lookup table or SRAM based sbox implementation.

The paper in question isn’t written by Andy Samberg’s character on Brooklyn Nine-Nine, but instead is referencing A small depth-16 circuit for the AES S-Box (that link is the unpaywalled version, the paper was published in the SEC 2012 proceedings).

This is a problematic statement, as preventing side-channel power leakage takes more than one simple fix. In this case, as far as side-channel power analysis goes, there is effectively no difference from an unprotected implementation. More on that in a moment.

Side-Channel Power Analysis

It’s worth pointing out I’m looking at a single small part of the entire device. There may be additional protocol-layer protection that would significantly complicate the analysis I perform; I just have no idea, as I haven’t looked into that.

Realistically, side-channel power analysis might be a threat. A leaking core on its own might be impossible or very difficult to exploit due to use-cases. But it might form part of a larger attack (i.e. someone is able to take control of the core using a different attack method).

Side-channel power analysis (or Differential Power Analysis, called DPA) also requires that the device is actually operating with the key we want to recover. You cannot use DPA on an encrypted hard drive sitting on the table, for example. You could only use it to recover the encryption key while the drive is decrypting or encrypting something. If the encryption key comes from the user, this means DPA is useless against an encrypted drive you recovered from someone.

Because of these caveats I like to stress this isn’t some master attack. In fact the only thing that makes it noteworthy is the documentation claimed some level of DPA resistance. Anyway on with the attack…

Attack Theory

DPA attacks are based on small power measurements being correlated with either data values or changes in data. In the referenced paper from earlier, the DPA protection comes from the input and output of the S-Box never being put onto the same register.

This means we could never see the number of bits flipped between the input and output of the S-Box. Thus a power analysis attack on the S-Box itself would fail, and the S-Box is normally where the easy-to-stop leak is. But it’s not the only place a leak can occur.

Looking at the source code, we see the following Verilog lines during the encryption (similar lines for decryption):

    begin
      state <= state_new;  // state register is overwritten with the new round state
      if (round == round_max)
        begin
          data_o <= state_new;
          busy_o <= 0;
        end
    end

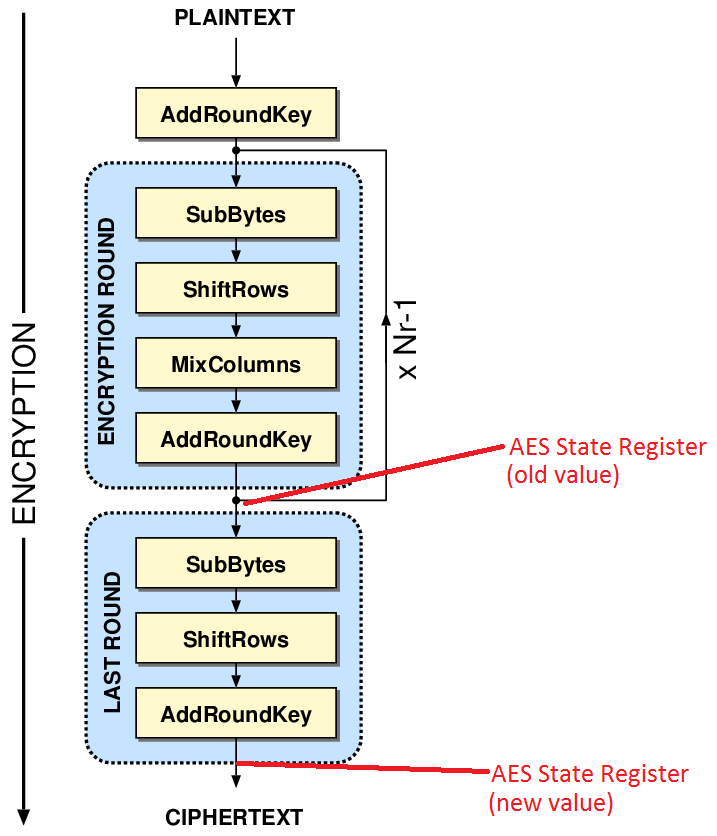

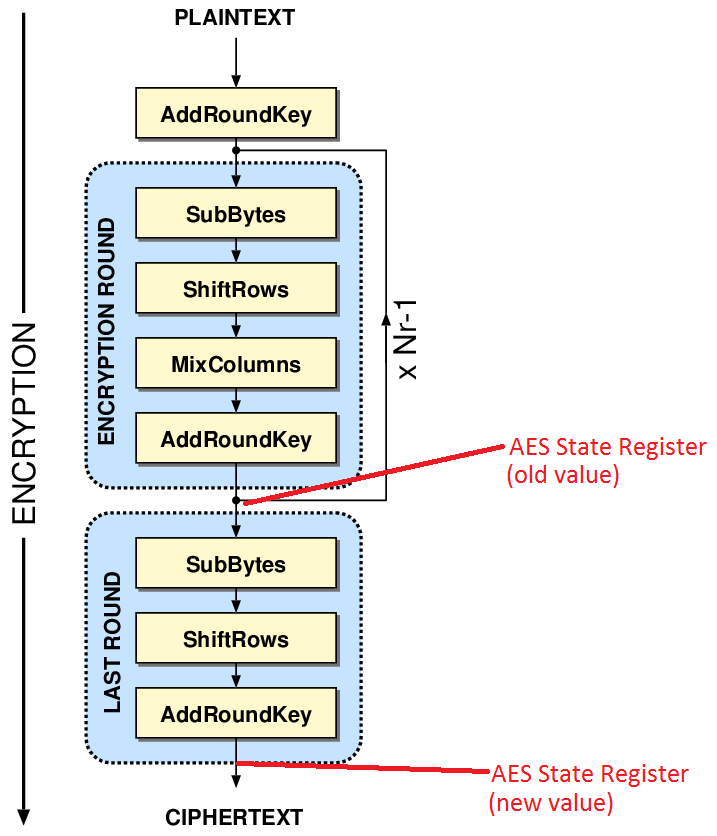

This is problematic, as the 128-bit AES state is held in a register. That register is overwritten on each round. In particular, looking at the last round (this figure based on one shamelessly stolen from Frank Gürkaynak’s Thesis), note the “old value” to “new value”:

The ShiftRows is easily reversed (it’s just swapping around the location of bytes). This in fact means the input and output of the S-Box are effectively written into the same register, giving us an easy way to count bit flips (Hamming Distance). We can correlate the expected number of bit transitions with the measured power, as in a standard DPA attack.
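As a rough illustration of that leakage model, here is a minimal Python sketch. It is not taken from the Project Vault or ChipWhisperer code. It assumes the usual column-major AES byte ordering and that the state register ends up holding the ciphertext after the last round; the function names (`shiftrows_source`, `last_round_hd`) are made up for this example. The S-Box is generated from its mathematical definition rather than typed in as a table.

```python
def gf_mul(a, b):
    """Multiply two bytes in GF(2^8) using the AES polynomial 0x11B."""
    result = 0
    while b:
        if b & 1:
            result ^= a
        a <<= 1
        if a & 0x100:
            a ^= 0x11B
        b >>= 1
    return result


def gf_inv(a):
    """Multiplicative inverse in GF(2^8), computed as a**254 (0 maps to 0)."""
    result = 1
    for _ in range(254):
        result = gf_mul(result, a)
    return result


def rotl8(x, n):
    """Rotate a byte left by n bits."""
    return ((x << n) | (x >> (8 - n))) & 0xFF


def build_sbox():
    """AES S-Box: field inversion followed by the affine transform."""
    sbox = []
    for x in range(256):
        b = gf_inv(x)
        sbox.append(b ^ rotl8(b, 1) ^ rotl8(b, 2) ^ rotl8(b, 3) ^ rotl8(b, 4) ^ 0x63)
    return sbox


SBOX = build_sbox()
INV_SBOX = [0] * 256
for value, substituted in enumerate(SBOX):
    INV_SBOX[substituted] = value


def shiftrows_source(i):
    """Index of the pre-ShiftRows byte that lands at position i (column-major state)."""
    row, col = i % 4, i // 4
    return 4 * ((col + row) % 4) + row


def last_round_hd(ciphertext, i, key_guess):
    """Hypothetical number of bit flips in one state-register byte in the final round.

    ciphertext: the 16 ciphertext bytes
    i:          position of the last-round key byte being guessed
    key_guess:  candidate value for that key byte
    """
    reg = shiftrows_source(i)                        # register byte that fed position i
    old_value = INV_SBOX[ciphertext[i] ^ key_guess]  # S-Box input held before the round
    new_value = ciphertext[reg]                      # value that overwrites that register byte
    return bin(old_value ^ new_value).count("1")
```

With the correct guess for byte `i` of the last round key, the returned value is the real number of bits that flipped in that register byte. Wrong guesses give essentially unrelated numbers, which is what the correlation step exploits.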

Attack Test

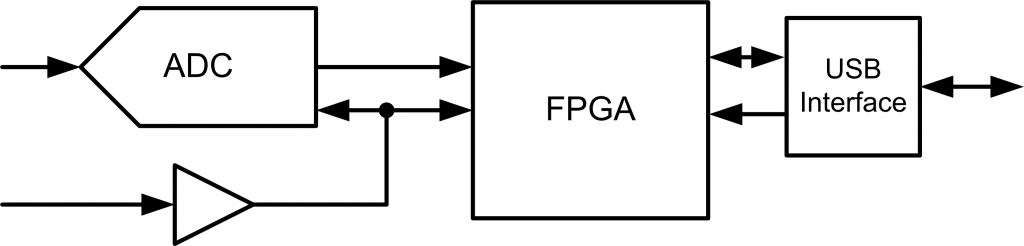

While it’s not really needed to test this in theory, nobody believes hand-waving. So I used a SAKURA-G FPGA board (Spartan 6 LX75) with my OpenADC and ChipWhisperer software, as I happen to have it around:

You could easily use my ChipWhisperer-Lite with any other FPGA board instead of the SAKURA-G. The SAKURA-G makes power measurement easier, otherwise you can use some H-Field probes etc.

There’s not a lot to this: I ripped out just the AES core (i.e. everything in this directory in the Git repository). It’s easy to interface to the existing FPGA code given with the SAKURA-G, as the interface is almost exactly the same (key in, block in, block out, clk, go command).

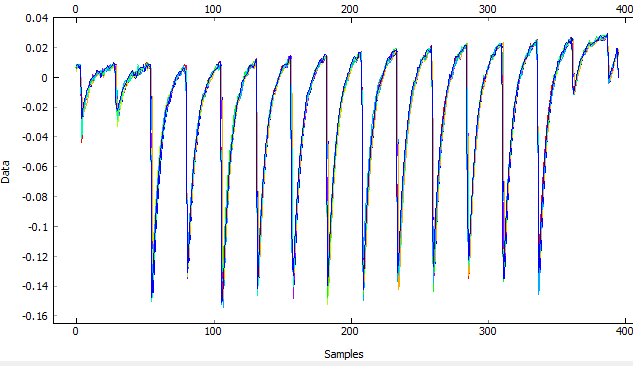

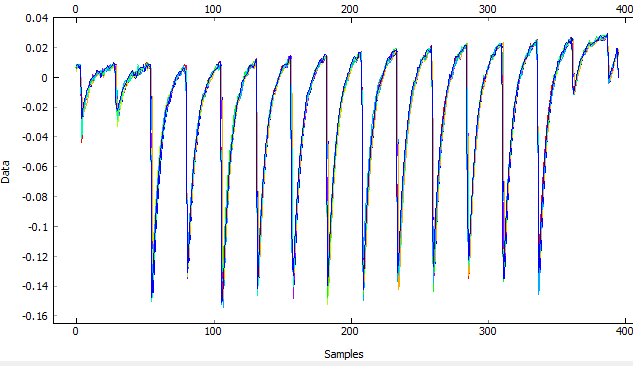

There were a few cycles of synchronization error for some reason, but I used a “resync by sum of absolute difference” in my ChipWhisperer software. Here is what the raw power traces look like after resync:

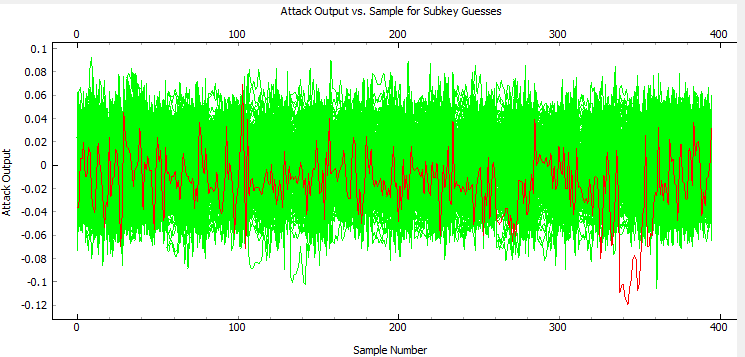

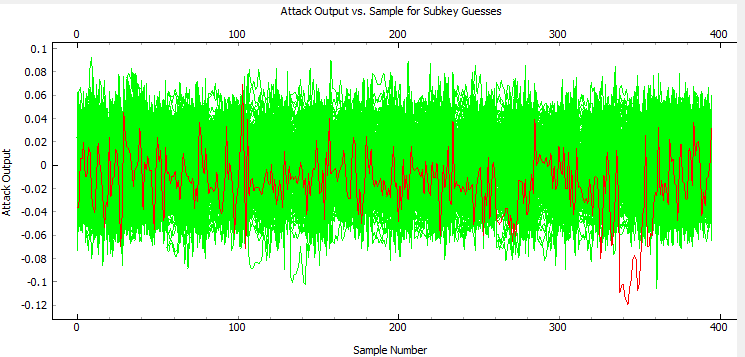
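For readers unfamiliar with the resync step, the idea behind a sum-of-absolute-difference alignment can be sketched in a few lines of Python. This is only an illustration of the principle, not the ChipWhisperer implementation; the function names, the window tuple and the `max_shift` parameter are assumptions made for this example.

```python
def sad(a, b):
    """Sum of absolute differences between two equal-length sequences."""
    return sum(abs(x - y) for x, y in zip(a, b))


def resync_sad(trace, reference, window, max_shift):
    """Shift `trace` so that it best matches `reference` inside `window`.

    window:    (start, end) sample range of a distinctive feature in the reference
    max_shift: largest shift (in samples) to try in either direction
    Returns (aligned_trace, best_shift).
    """
    start, end = window
    ref_segment = reference[start:end]
    best_shift, best_score = 0, None
    for shift in range(-max_shift, max_shift + 1):
        lo, hi = start + shift, end + shift
        if lo < 0 or hi > len(trace):
            continue  # candidate window would fall off the trace
        score = sad(trace[lo:hi], ref_segment)
        if best_score is None or score < best_score:
            best_shift, best_score = shift, score
    # Move the samples by the best shift, repeating the edge sample where needed.
    n = len(trace)
    aligned = [trace[min(max(i + best_shift, 0), n - 1)] for i in range(n)]
    return aligned, best_shift


# Example: a trace that is the reference delayed by two samples.
reference = [0] * 10 + [1, 4, 9, 4, 1] + [0] * 10
delayed = [0, 0] + reference[:-2]
aligned, shift = resync_sad(delayed, reference, window=(8, 17), max_shift=4)
print(shift)
```

Every trace is aligned against the same reference trace, so a feature such as the last-round power dip ends up at the same sample index in all of them.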

Running an attack targeting the last round-state difference of AES gives us a nice figure where the known encryption key bytes (in red) are filtering to the top of the “most likely encryption keys”, here with 2000 traces:

You can check where the leakage is occurring too. In the following figure the “correct” byte value is highlighted in red. You can see around sample ~342 there is the largest absolute peak of that correlation value, and it rises above all the wrong guesses. This corresponds to around the last round (based on the power dips in the earlier waveform):
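The ranking and the "where does it leak" check both come from the same correlation calculation. Below is a small, self-contained Python sketch of that calculation on simulated data, not on the real measurements. To keep it short, a shuffled byte permutation (`PERM`) stands in for the AES inverse S-Box, the traces are only five samples long, and the leak is planted at a sample index I picked; all names and numbers here are assumptions for illustration.

```python
import math
import random

rng = random.Random(1)  # fixed seed so the simulation is repeatable

# ASSUMPTION: stand-in for the AES inverse S-Box. Any nonlinear byte
# permutation shows the statistics; a real attack uses the real table.
PERM = list(range(256))
rng.shuffle(PERM)


def hamming_weight(x):
    return bin(x).count("1")


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def rank_guesses(traces, ct_bytes, reg_bytes):
    """For each key guess, return (peak |correlation|, sample index of that peak)."""
    n_samples = len(traces[0])
    columns = [[t[s] for t in traces] for s in range(n_samples)]
    results = {}
    for guess in range(256):
        # Hypothetical Hamming distance for every trace under this guess.
        hyp = [hamming_weight(PERM[c ^ guess] ^ r)
               for c, r in zip(ct_bytes, reg_bytes)]
        corrs = [abs(pearson(hyp, col)) for col in columns]
        peak = max(range(n_samples), key=lambda s: corrs[s])
        results[guess] = (corrs[peak], peak)
    return results


# Simulated measurement: Gaussian noise everywhere, plus the Hamming
# distance of the register update added at one sample.
TRUE_KEY, LEAK_SAMPLE, N_TRACES, N_SAMPLES = 0x2B, 3, 200, 5
ct_bytes, reg_bytes, traces = [], [], []
for _ in range(N_TRACES):
    c, r = rng.randrange(256), rng.randrange(256)
    trace = [rng.gauss(0.0, 1.0) for _ in range(N_SAMPLES)]
    trace[LEAK_SAMPLE] += hamming_weight(PERM[c ^ TRUE_KEY] ^ r)
    ct_bytes.append(c)
    reg_bytes.append(r)
    traces.append(trace)

results = rank_guesses(traces, ct_bytes, reg_bytes)
best_guess = max(results, key=lambda g: results[g][0])
print(hex(best_guess), results[best_guess])
```

The guess with the highest peak correlation is the most likely key byte, and the sample index of that peak tells you when in the trace the register update happened.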

That’s it! It’s really a standard Hamming-Distance attack against AES. The special S-Box design didn’t make the attack any harder. Again, this was done in a controlled environment, so it’s quite possible there are higher-level protections that make this attack much, much harder.

Considering the device will (presumably) only have the encryption keys loaded when the user is doing stuff, it’s a pretty small risk. An attacker would have to monitor the power while you are using the device to deduce your keys… and if they are that close, they might just try seducing you instead.